This reference guide currently covers:

- GPT-4 API request/response schema

- Python examples: the OpenAI Python library and LangChain

- Why use the GPT-4 API

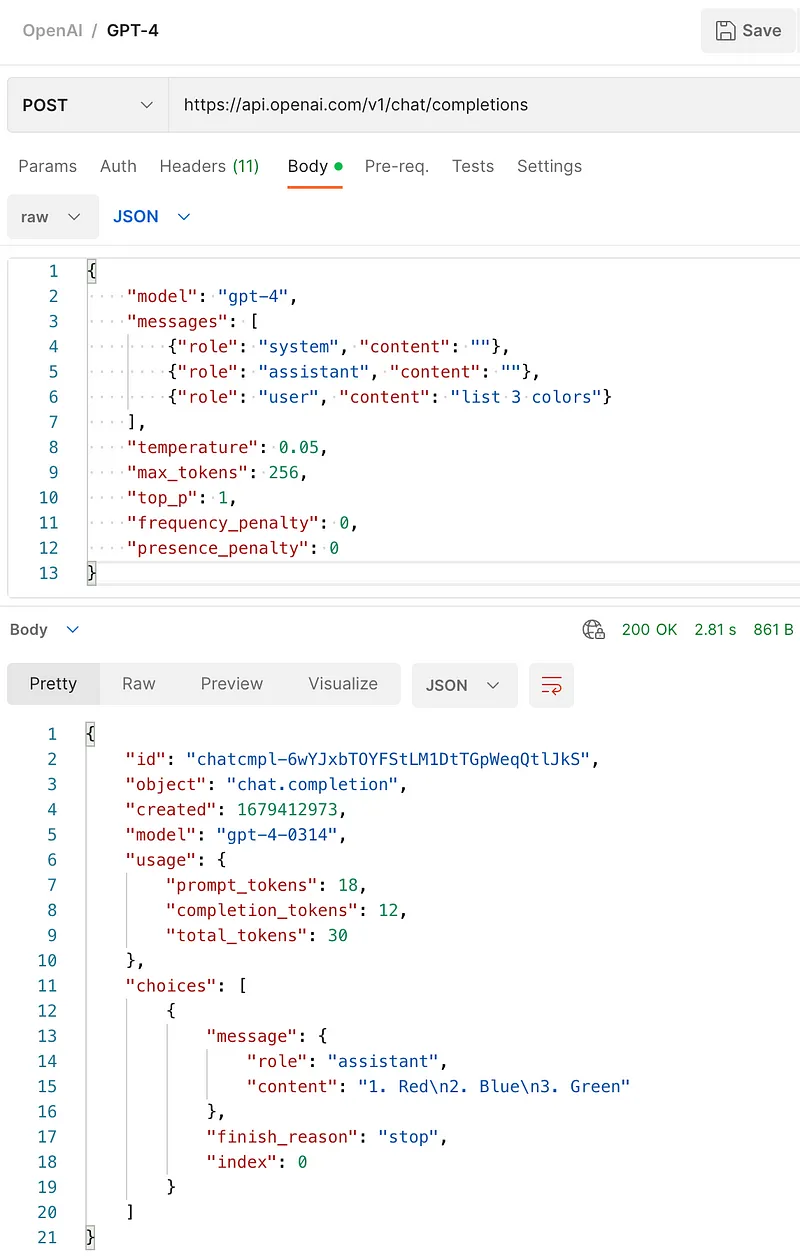

The GPT-4 API in Postman

GPT-4 API Request

Endpoint

POST https://api.openai.com/v1/chat/completions

Headers

Content-Type: application/json

Authorization: Bearer YOUR_OPENAI_API_KEY

Body

{

"model": "gpt-4",

"messages": [

{"role": "system", "content": "Set the behavior"},

{"role": "assistant", "content": "Provide examples"},

{"role": "user", "content": "Set the instructions"}

],

"temperature": 0.05,

"max_tokens": 256,

"top_p": 1,

"frequency_penalty": 0,

"presence_penalty": 0

}
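The endpoint, headers, and body above can be assembled in code. The following is a minimal sketch using only the Python standard library; the helper name `build_chat_request` is hypothetical, and the request is built but deliberately not sent, so it runs without a key or network access. Note that the key goes into the Authorization header as a Bearer token.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, user_prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the chat completions endpoint."""
    body = {
        "model": "gpt-4",
        "messages": [
            {"role": "system", "content": "Set the behavior"},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": 0.05,
        "max_tokens": 256,
        "top_p": 1,
        "frequency_penalty": 0,
        "presence_penalty": 0,
    }
    headers = {
        "Content-Type": "application/json",
        # The API key is sent as a bearer token.
        "Authorization": f"Bearer {api_key}",
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


request = build_chat_request("YOUR_OPENAI_API_KEY", "Set the instructions")
print(request.get_method(), request.full_url)

# Sending it would look like this (requires a valid key and network access):
# with urllib.request.urlopen(request) as response:
#     result = json.load(response)
```

Keeping construction separate from sending makes the payload easy to inspect before any tokens are billed.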

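**model:** Which model version to use (for example, "gpt-4").

**messages:**

- system: Sets how the assistant should behave.

- assistant: Provides examples of how the assistant should respond.

- user: Gives the instructions the assistant should follow.

**temperature:** Controls how creative or random the responses are. Lower values (such as 0.05) make the assistant more focused and consistent; higher values make it more creative and less predictable.

**max_tokens:** The maximum number of tokens (words or pieces of words) the assistant may generate in its response.

**top_p:** Also controls the randomness of the response. A value of 1 means the model samples from the full range of candidate tokens; lower values restrict it to the most probable ones.

**frequency_penalty:** Penalizes tokens in proportion to how often they have already appeared in the text so far, which makes verbatim repetition less likely. A value of 0 applies no penalty.

**presence_penalty:** Penalizes tokens that have already appeared at all, regardless of how often, which nudges the model toward new topics. A value of 0 applies no penalty.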
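To make temperature and the two penalties more concrete, here is a conceptual sketch. It assumes the commonly described mechanics: temperature divides the logits before a softmax, and each penalty is subtracted from the logit of a token that has already been generated (frequency_penalty once per occurrence, presence_penalty once in total). The token names and logit values are invented for illustration, the function names are hypothetical, and the service's actual implementation is not public.

```python
import math


def apply_penalties(logits, counts, frequency_penalty=0.0, presence_penalty=0.0):
    """Lower the logit of each token that has already been generated."""
    adjusted = {}
    for token, logit in logits.items():
        seen = counts.get(token, 0)
        adjusted[token] = (
            logit
            - seen * frequency_penalty                        # grows with every repeat
            - (1.0 if seen > 0 else 0.0) * presence_penalty   # flat, applied once seen
        )
    return adjusted


def softmax_with_temperature(logits, temperature):
    """Turn logits into probabilities; temperature must be > 0 in this sketch."""
    scaled = {token: value / temperature for token, value in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {token: math.exp(value - peak) for token, value in scaled.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}


# Hypothetical logits for three candidate next tokens.
logits = {"future": 2.0, "present": 1.0, "past": 0.5}

focused = softmax_with_temperature(logits, temperature=0.05)
varied = softmax_with_temperature(logits, temperature=1.5)
print("P(future) at T=0.05:", round(focused["future"], 3))
print("P(future) at T=1.5: ", round(varied["future"], 3))

# "future" has already been generated twice, so both penalties apply to it.
penalized = apply_penalties(
    logits, {"future": 2}, frequency_penalty=0.5, presence_penalty=0.5
)
print("Penalized logits:", penalized)
```

At a low temperature nearly all probability mass lands on the top token, which is why a value such as 0.05 gives focused, repeatable answers.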

GPT-4 API Response

{

"id": "chatcmpl-6viHI5cWjA8QWbeeRtZFBnYMl1EKV",

"object": "chat.completion",

"created": 1679212920,

"model": "gpt-4-0314",

"usage": {

"prompt_tokens": 21,

"completion_tokens": 5,

"total_tokens": 26

},

"choices": [

{

"message": {

"role": "assistant",

"content": "GPT-4 response returned here"

},

"finish_reason": "stop",

"index": 0

}

]

}

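**id:** A unique identifier for the completion.

**object:** The object type, here chat.completion.

**created:** When the response was created, as a Unix timestamp (seconds since January 1, 1970, UTC).

**model:** The model version that produced the response (here "gpt-4-0314").

**usage:**

- prompt_tokens: The number of tokens in the request, priced at $0.03 per 1K tokens (about 750 words).

- completion_tokens: The number of tokens in the response, priced at $0.06 per 1K tokens (about 750 words).

- total_tokens: prompt_tokens + completion_tokens

**choices:**

- message: The role (here "assistant") and the content (the actual response text).

- finish_reason: Why the model stopped generating. Here it stopped because it reached a natural stopping point ("stop"). Valid values are stop, length, content_filter, and null.

- index: The position of this choice in the list (0 means it is the first response).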
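As a rough illustration of reading these fields, the sketch below parses the sample response from this section with the standard library. The helper name `summarize_response` and the keys of the dictionary it returns are hypothetical, chosen only for this example.

```python
import json
from datetime import datetime, timezone

# The sample response from this section.
raw_response = """
{
  "id": "chatcmpl-6viHI5cWjA8QWbeeRtZFBnYMl1EKV",
  "object": "chat.completion",
  "created": 1679212920,
  "model": "gpt-4-0314",
  "usage": {"prompt_tokens": 21, "completion_tokens": 5, "total_tokens": 26},
  "choices": [
    {
      "message": {"role": "assistant", "content": "GPT-4 response returned here"},
      "finish_reason": "stop",
      "index": 0
    }
  ]
}
"""


def summarize_response(response: dict) -> dict:
    """Pull out the fields most callers need from a chat completion response."""
    first_choice = response["choices"][0]
    return {
        "text": first_choice["message"]["content"],
        # "length" means the reply was cut off by max_tokens or the context limit.
        "truncated": first_choice["finish_reason"] == "length",
        # "created" is a Unix timestamp in seconds (UTC).
        "created_at": datetime.fromtimestamp(response["created"], tz=timezone.utc),
        "total_tokens": response["usage"]["total_tokens"],
    }


summary = summarize_response(json.loads(raw_response))
print(summary["text"])
print(summary["created_at"].isoformat())
print("truncated:", summary["truncated"])
```

Checking finish_reason before using the text is a cheap way to notice replies that were cut short.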

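Python Examples

Although you can send HTTPS requests to the GPT-4 API directly using the information above, it is better to use the official OpenAI library, or to go a step further and use an LLM abstraction layer such as LangChain.

Jupyter Notebook for the OpenAI Python library and LangChain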

{

"nbformat": 4,

"nbformat_minor": 0,

"metadata": {

"colab": {

"provenance": [],

"authorship_tag": "ABX9TyNin3vntcd4FM6CYdBsLcIr",

"include_colab_link": true

},

"kernelspec": {

"name": "python3",

"display_name": "Python 3"

},

"language_info": {

"name": "python"

}

},

"cells": [

{

"cell_type": "markdown",

"metadata": {

"id": "view-in-github",

"colab_type": "text"

},

"source": [

"<a href=\"https://colab.research.google.com/gist/IvanCampos/c3f70e58efdf012a6422f2444dc3c261/langchain-gpt-4.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"

]

},

{

"cell_type": "markdown",

"source": [

"**OPENAI PYTHON LIBRARY**"

],

"metadata": {

"id": "wIs-na6UdiRX"

}

},

{

"cell_type": "code",

"source": [

"!pip install openai"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "TjnIGuQfNvzt",

"outputId": "7d1ebf99-f7b5-4664-c100-228fa94a5576"

},

"execution_count": 1,

"outputs": [

{

"output_type": "stream",

"name": "stdout",

"text": [

"Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/\n",

"Collecting openai\n",

" Downloading openai-0.27.2-py3-none-any.whl (70 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m70.1/70.1 KB\u001b[0m \u001b[31m4.4 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hRequirement already satisfied: requests>=2.20 in /usr/local/lib/python3.9/dist-packages (from openai) (2.27.1)\n",

"Collecting aiohttp\n",

" Downloading aiohttp-3.8.4-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (1.0 MB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m1.0/1.0 MB\u001b[0m \u001b[31m11.8 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hRequirement already satisfied: tqdm in /usr/local/lib/python3.9/dist-packages (from openai) (4.65.0)\n",

"Requirement already satisfied: certifi>=2017.4.17 in /usr/local/lib/python3.9/dist-packages (from requests>=2.20->openai) (2022.12.7)\n",

"Requirement already satisfied: urllib3<1.27,>=1.21.1 in /usr/local/lib/python3.9/dist-packages (from requests>=2.20->openai) (1.26.15)\n",

"Requirement already satisfied: idna<4,>=2.5 in /usr/local/lib/python3.9/dist-packages (from requests>=2.20->openai) (3.4)\n",

"Requirement already satisfied: charset-normalizer~=2.0.0 in /usr/local/lib/python3.9/dist-packages (from requests>=2.20->openai) (2.0.12)\n",

"Collecting yarl<2.0,>=1.0\n",

" Downloading yarl-1.8.2-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (264 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m264.6/264.6 KB\u001b[0m \u001b[31m30.9 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hCollecting aiosignal>=1.1.2\n",

" Downloading aiosignal-1.3.1-py3-none-any.whl (7.6 kB)\n",

"Requirement already satisfied: attrs>=17.3.0 in /usr/local/lib/python3.9/dist-packages (from aiohttp->openai) (22.2.0)\n",

"Collecting frozenlist>=1.1.1\n",

" Downloading frozenlist-1.3.3-cp39-cp39-manylinux_2_5_x86_64.manylinux1_x86_64.manylinux_2_17_x86_64.manylinux2014_x86_64.whl (158 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m158.8/158.8 KB\u001b[0m \u001b[31m20.8 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hCollecting async-timeout<5.0,>=4.0.0a3\n",

" Downloading async_timeout-4.0.2-py3-none-any.whl (5.8 kB)\n",

"Collecting multidict<7.0,>=4.5\n",

" Downloading multidict-6.0.4-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (114 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m114.2/114.2 KB\u001b[0m \u001b[31m11.6 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hInstalling collected packages: multidict, frozenlist, async-timeout, yarl, aiosignal, aiohttp, openai\n",

"Successfully installed aiohttp-3.8.4 aiosignal-1.3.1 async-timeout-4.0.2 frozenlist-1.3.3 multidict-6.0.4 openai-0.27.2 yarl-1.8.2\n"

]

}

]

},

{

"cell_type": "code",

"source": [

"import openai"

],

"metadata": {

"id": "FW_xUOJ5eZd-"

},

"execution_count": 3,

"outputs": []

},

{

"cell_type": "code",

"source": [

"openai.api_key = \"YOUR_OPENAI_API_KEY\""

],

"metadata": {

"id": "-dbOFoxWdh6L"

},

"execution_count": 4,

"outputs": []

},

{

"cell_type": "code",

"source": [

"completion = openai.ChatCompletion.create(\n",

" model=\"gpt-4\", \n",

" messages=[{\"role\": \"user\", \"content\": \"in 7 words, explain why artificial intelligence is the future\"}]\n",

" )\n",

"print(completion.choices[0].message.content)"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "ygGcf8IwdxVr",

"outputId": "1f9956a0-fc3c-40b3-d1a9-a6cd717da057"

},

"execution_count": 25,

"outputs": [

{

"output_type": "stream",

"name": "stdout",

"text": [

"Automation, efficiency, accuracy, data analysis, safety, cost-effective, adaptability.\n"

]

}

]

},

{

"cell_type": "markdown",

"source": [

"**LANGCHAIN**"

],

"metadata": {

"id": "upvQ5UgjelMR"

}

},

{

"cell_type": "code",

"source": [

"!pip install langchain"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "jc25fuF-NrQt",

"outputId": "255a94ae-6573-4eab-d9bd-14e5589bf251"

},

"execution_count": 6,

"outputs": [

{

"output_type": "stream",

"name": "stdout",

"text": [

"Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/\n",

"Collecting langchain\n",

" Downloading langchain-0.0.117-py3-none-any.whl (414 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m414.2/414.2 KB\u001b[0m \u001b[31m10.7 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hRequirement already satisfied: pydantic<2,>=1 in /usr/local/lib/python3.9/dist-packages (from langchain) (1.10.6)\n",

"Requirement already satisfied: numpy<2,>=1 in /usr/local/lib/python3.9/dist-packages (from langchain) (1.22.4)\n",

"Requirement already satisfied: aiohttp<4.0.0,>=3.8.3 in /usr/local/lib/python3.9/dist-packages (from langchain) (3.8.4)\n",

"Requirement already satisfied: tenacity<9.0.0,>=8.1.0 in /usr/local/lib/python3.9/dist-packages (from langchain) (8.2.2)\n",

"Requirement already satisfied: SQLAlchemy<2,>=1 in /usr/local/lib/python3.9/dist-packages (from langchain) (1.4.46)\n",

"Requirement already satisfied: requests<3,>=2 in /usr/local/lib/python3.9/dist-packages (from langchain) (2.27.1)\n",

"Requirement already satisfied: PyYAML>=5.4.1 in /usr/local/lib/python3.9/dist-packages (from langchain) (6.0)\n",

"Collecting dataclasses-json<0.6.0,>=0.5.7\n",

" Downloading dataclasses_json-0.5.7-py3-none-any.whl (25 kB)\n",

"Requirement already satisfied: charset-normalizer<4.0,>=2.0 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (2.0.12)\n",

"Requirement already satisfied: yarl<2.0,>=1.0 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (1.8.2)\n",

"Requirement already satisfied: attrs>=17.3.0 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (22.2.0)\n",

"Requirement already satisfied: frozenlist>=1.1.1 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (1.3.3)\n",

"Requirement already satisfied: async-timeout<5.0,>=4.0.0a3 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (4.0.2)\n",

"Requirement already satisfied: multidict<7.0,>=4.5 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (6.0.4)\n",

"Requirement already satisfied: aiosignal>=1.1.2 in /usr/local/lib/python3.9/dist-packages (from aiohttp<4.0.0,>=3.8.3->langchain) (1.3.1)\n",

"Collecting marshmallow-enum<2.0.0,>=1.5.1\n",

" Downloading marshmallow_enum-1.5.1-py2.py3-none-any.whl (4.2 kB)\n",

"Collecting marshmallow<4.0.0,>=3.3.0\n",

" Downloading marshmallow-3.19.0-py3-none-any.whl (49 kB)\n",

"\u001b[2K \u001b[90m━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━\u001b[0m \u001b[32m49.1/49.1 KB\u001b[0m \u001b[31m4.1 MB/s\u001b[0m eta \u001b[36m0:00:00\u001b[0m\n",

"\u001b[?25hCollecting typing-inspect>=0.4.0\n",

" Downloading typing_inspect-0.8.0-py3-none-any.whl (8.7 kB)\n",

"Requirement already satisfied: typing-extensions>=4.2.0 in /usr/local/lib/python3.9/dist-packages (from pydantic<2,>=1->langchain) (4.5.0)\n",

"Requirement already satisfied: idna<4,>=2.5 in /usr/local/lib/python3.9/dist-packages (from requests<3,>=2->langchain) (3.4)\n",

"Requirement already satisfied: certifi>=2017.4.17 in /usr/local/lib/python3.9/dist-packages (from requests<3,>=2->langchain) (2022.12.7)\n",

"Requirement already satisfied: urllib3<1.27,>=1.21.1 in /usr/local/lib/python3.9/dist-packages (from requests<3,>=2->langchain) (1.26.15)\n",

"Requirement already satisfied: greenlet!=0.4.17 in /usr/local/lib/python3.9/dist-packages (from SQLAlchemy<2,>=1->langchain) (2.0.2)\n",

"Requirement already satisfied: packaging>=17.0 in /usr/local/lib/python3.9/dist-packages (from marshmallow<4.0.0,>=3.3.0->dataclasses-json<0.6.0,>=0.5.7->langchain) (23.0)\n",

"Collecting mypy-extensions>=0.3.0\n",

" Downloading mypy_extensions-1.0.0-py3-none-any.whl (4.7 kB)\n",

"Installing collected packages: mypy-extensions, marshmallow, typing-inspect, marshmallow-enum, dataclasses-json, langchain\n",

"Successfully installed dataclasses-json-0.5.7 langchain-0.0.117 marshmallow-3.19.0 marshmallow-enum-1.5.1 mypy-extensions-1.0.0 typing-inspect-0.8.0\n"

]

}

]

},

{

"cell_type": "code",

"execution_count": 7,

"metadata": {

"id": "9Mh-o0TWNV6l"

},

"outputs": [],

"source": [

"from langchain.chat_models import ChatOpenAI\n",

"from langchain import PromptTemplate, LLMChain\n",

"from langchain.prompts.chat import (\n",

" ChatPromptTemplate,\n",

" SystemMessagePromptTemplate,\n",

" AIMessagePromptTemplate,\n",

" HumanMessagePromptTemplate,\n",

")\n",

"from langchain.schema import (\n",

" AIMessage,\n",

" HumanMessage,\n",

" SystemMessage\n",

")"

]

},

{

"cell_type": "code",

"source": [

"import os\n",

"os.environ['OPENAI_API_KEY'] = 'YOUR_OPENAI_API_KEY'"

],

"metadata": {

"id": "Ak433tRsNyyb"

},

"execution_count": 8,

"outputs": []

},

{

"cell_type": "markdown",

"source": [

"**CHAT**"

],

"metadata": {

"id": "isebouwnT5V0"

}

},

{

"cell_type": "code",

"source": [

"chat = ChatOpenAI(temperature=0, model_name=\"gpt-4\")"

],

"metadata": {

"id": "DIz2zrJ7N7iD"

},

"execution_count": 9,

"outputs": []

},

{

"cell_type": "code",

"source": [

"chat([HumanMessage(content=\"Translate this sentence from English to Spanish. Artificial Intelligence is the future.\")])"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "x_0OHs4wQmTx",

"outputId": "40c8d939-072d-4e54-ce8c-4c36da47c159"

},

"execution_count": 20,

"outputs": [

{

"output_type": "stream",

"name": "stderr",

"text": [

"WARNING:/usr/local/lib/python3.9/dist-packages/langchain/chat_models/openai.py:Retrying langchain.chat_models.openai.ChatOpenAI.completion_with_retry.<locals>._completion_with_retry in 4.0 seconds as it raised RateLimitError: The server had an error while processing your request. Sorry about that!.\n"

]

},

{

"output_type": "execute_result",

"data": {

"text/plain": [

"AIMessage(content='La inteligencia artificial es el futuro.', additional_kwargs={})"

]

},

"metadata": {},

"execution_count": 20

}

]

},

{

"cell_type": "code",

"source": [

"chat([HumanMessage(content=\"what are the three largest cities in MA\"),AIMessage(content=\"\"),SystemMessage(content=\"you are a snarky assistant\")])"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "AsFSC_D4S1C_",

"outputId": "ca57aaec-0e92-4c83-a926-9126f4953cd9"

},

"execution_count": 11,

"outputs": [

{

"output_type": "execute_result",

"data": {

"text/plain": [

"AIMessage(content='I apologize if my previous response seemed snarky. The three largest cities in Massachusetts are:\\n\\n1. Boston\\n2. Worcester\\n3. Springfield', additional_kwargs={})"

]

},

"metadata": {},

"execution_count": 11

}

]

},

{

"cell_type": "markdown",

"source": [

"**PROMPT TEMPLATE**"

],

"metadata": {

"id": "CEGPefQzTu7Z"

}

},

{

"cell_type": "code",

"source": [

"template=\"You are a helpful assistant that translates {input_language} to {output_language}.\"\n",

"system_message_prompt = SystemMessagePromptTemplate.from_template(template)\n",

"human_template=\"{text}\"\n",

"human_message_prompt = HumanMessagePromptTemplate.from_template(human_template)"

],

"metadata": {

"id": "CVyDs6wcSNqn"

},

"execution_count": 12,

"outputs": []

},

{

"cell_type": "code",

"source": [

"chat_prompt = ChatPromptTemplate.from_messages([system_message_prompt, human_message_prompt])\n",

"\n",

"# get a chat completion from the formatted messages\n",

"chat(chat_prompt.format_prompt(input_language=\"English\", output_language=\"Spanish\", text=\"Artificial Intelligence is the future.\").to_messages())"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/"

},

"id": "ON8-_WnlSS0j",

"outputId": "907d6683-8572-47c9-850c-164d8dc3d935"

},

"execution_count": 21,

"outputs": [

{

"output_type": "execute_result",

"data": {

"text/plain": [

"AIMessage(content='La inteligencia artificial es el futuro.', additional_kwargs={})"

]

},

"metadata": {},

"execution_count": 21

}

]

},

{

"cell_type": "markdown",

"source": [

"**LLMChain**"

],

"metadata": {

"id": "rZ91aUK4UAR0"

}

},

{

"cell_type": "code",

"source": [

"chain = LLMChain(llm=chat, prompt=chat_prompt)"

],

"metadata": {

"id": "1chnqKppR-D4"

},

"execution_count": 14,

"outputs": []

},

{

"cell_type": "code",

"source": [

"chain.run(input_language=\"English\", output_language=\"Spanish\", text=\"Artificial Intelligence is the future.\")"

],

"metadata": {

"colab": {

"base_uri": "https://localhost:8080/",

"height": 36

},

"id": "6tqIT0B2SnZv",

"outputId": "1bf9c358-668a-416b-b375-f7b41ea2f9c7"

},

"execution_count": 22,

"outputs": [

{

"output_type": "execute_result",

"data": {

"text/plain": [

"'La inteligencia artificial es el futuro.'"

],

"application/vnd.google.colaboratory.intrinsic+json": {

"type": "string"

}

},

"metadata": {},

"execution_count": 22

}

]

}

]

}

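OpenAI Python Library Walkthrough

The OpenAI Python library makes it easy to connect to and communicate with OpenAI's services. It ships with a set of built-in classes that let programs interact with the API easily. These classes adapt automatically to the information received from the API, which keeps the library compatible with many versions of OpenAI's services.

- !pip install openai: Installs the "openai" package, which provides tools for communicating with the OpenAI API.

- import openai: Imports the "openai" package so its tools can be used in the script.

- openai.api_key = "YOUR_OPENAI_API_KEY": Sets the key needed to access the OpenAI API. Replace "YOUR_OPENAI_API_KEY" with your actual key.

- completion = openai.ChatCompletion.create(...): Sends a message to the chat completions endpoint asking it to explain, in 7 words, why artificial intelligence is the future. It specifies the model version ("gpt-4") and the message content.

- print(completion.choices[0].message.content): Prints GPT-4's response to the console.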
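The notebook cells can be collected into one small script. The sketch below mirrors the notebook's calls, which target openai 0.27.x (the version installed in the notebook output); later major versions of the library changed this interface. Two things are assumptions added here rather than taken from the notebook: the hypothetical helper names `load_api_key` and `ask_gpt4`, and reading the key from the `OPENAI_API_KEY` environment variable instead of hard-coding it.

```python
import os


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first.")
    return key


def ask_gpt4(prompt: str) -> str:
    """Send one user message to gpt-4 and return the reply text."""
    # Imported lazily so the helpers above work without the package installed.
    import openai  # assumes openai 0.27.x, as installed in the notebook

    openai.api_key = load_api_key()
    completion = openai.ChatCompletion.create(
        model="gpt-4",
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content


# Example call (needs a valid key, network access, and GPT-4 API access):
# print(ask_gpt4("in 7 words, explain why artificial intelligence is the future"))
```

Keeping the key out of the source file avoids accidentally committing it to version control or sharing it in a notebook.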

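LangChain Walkthrough

LangChain is a tool that abstracts and simplifies working with large language models (LLMs). It can be used for many purposes, such as building chatbots, answering questions, or summarizing text. The main idea behind LangChain is that different components can be chained together to build more complex and advanced applications on top of LLMs.

If you run the LangChain notebook cells on their own, you still need to pip install and import openai.

- !pip install langchain: Installs the "langchain" package, which provides tools for working with LLMs.

- from ... import ...: These lines import specific functions and classes from the "langchain" package so they can be used in the script.

- os.environ['OPENAI_API_KEY'] = 'YOUR_OPENAI_API_KEY': Sets the key needed to access the OpenAI API. Replace 'YOUR_OPENAI_API_KEY' with your actual key.

- chat = ChatOpenAI(...): Creates a GPT-4 chat model instance with specific settings (such as temperature).

- chat([...]): These lines show two examples of sending messages to the API and receiving a response. The first asks for a sentence to be translated; the second asks for the three largest cities in Massachusetts.

- template = "You are a helpful assistant...": Sets up a prompt template that defines the behavior for the request. Here it tells GPT-4 that it is a helpful translator.

- system_message_prompt = ...: Creates a SystemMessagePromptTemplate from that template, which sets the assistant's behavior.

- human_message_prompt = ...: Creates a HumanMessagePromptTemplate, which formats the message sent by the user.

- chat_prompt = ...: Combines the system and human message prompts into a single ChatPromptTemplate.

- chat(chat_prompt.format_prompt(...)): Uses the ChatPromptTemplate to send the API a message asking it to translate a sentence from English to Spanish.

- chain = LLMChain(llm=chat, prompt=chat_prompt): Creates an LLMChain object, which simplifies interaction with the LLM.

- chain.run(...): Uses the LLMChain object to send the GPT-4 API a message, again asking it to translate a sentence from English to Spanish.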
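It can help to see what the prompt-template step amounts to before any request is sent. The sketch below is a plain-Python stand-in, not LangChain's implementation: it fills the same two template strings used in the notebook and returns messages in the role/content shape of the chat API. The function name `format_chat_prompt` is hypothetical, and mapping the system and human templates to the "system" and "user" roles is an assumption about how the messages roughly correspond.

```python
# Plain-Python stand-in for the prompt-template step; not LangChain's code.
SYSTEM_TEMPLATE = (
    "You are a helpful assistant that translates "
    "{input_language} to {output_language}."
)
HUMAN_TEMPLATE = "{text}"


def format_chat_prompt(**variables) -> list:
    """Fill both templates and return messages in role/content form."""
    # str.format ignores keyword arguments a template does not use,
    # so the same variables can be passed to both templates.
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(**variables)},
        {"role": "user", "content": HUMAN_TEMPLATE.format(**variables)},
    ]


messages = format_chat_prompt(
    input_language="English",
    output_language="Spanish",
    text="Artificial Intelligence is the future.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The benefit of a template is that the same prompt structure can be reused with different languages and texts, which is what the chain.run(...) call in the notebook relies on.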

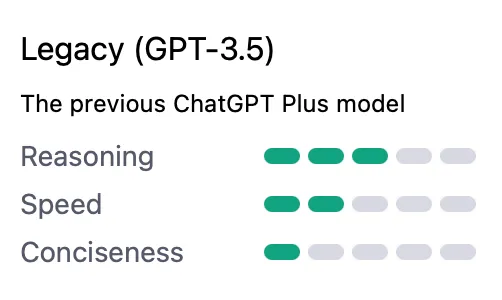

Why GPT-4?

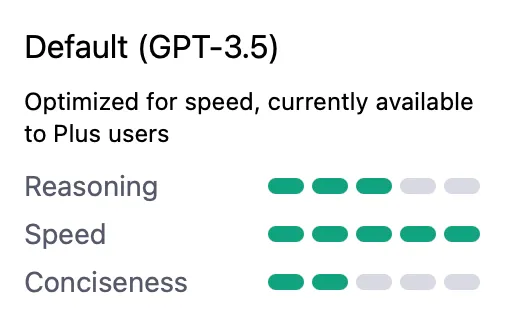

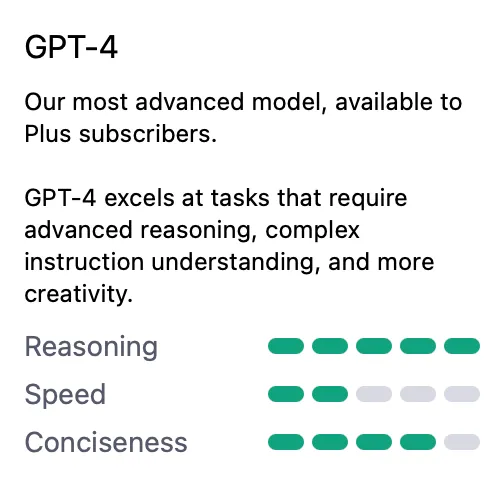

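One objective advantage is that the GPT-4 API accepts requests with a context length of 8,192 tokens (about 12.5 pages of text), twice the context length of GPT-3.5.

In addition, compared with earlier models, GPT-4 excels at reasoning and at producing concise completions.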
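To get a feel for what an 8,192-token window allows, the following sketch estimates token counts from word counts using this guide's rule of thumb (about 750 words per 1K tokens) and checks whether a prompt plus the reserved max_tokens budget fits. The function names are hypothetical and the estimate is deliberately rough; a real tokenizer will give different counts, especially for non-English text or code.

```python
import math

WORDS_PER_1K_TOKENS = 750    # rule of thumb used in this guide
GPT4_CONTEXT_TOKENS = 8192   # context length stated above


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate from a word count."""
    return math.ceil(word_count * 1000 / WORDS_PER_1K_TOKENS)


def fits_in_context(
    prompt_words: int,
    max_tokens: int,
    context_tokens: int = GPT4_CONTEXT_TOKENS,
) -> bool:
    """The prompt and the completion budget share one context window."""
    return estimate_tokens(prompt_words) + max_tokens <= context_tokens


print(estimate_tokens(3000))                # rough size of a 3,000-word prompt
print(fits_in_context(3000, 256))           # against the 8,192-token window
print(fits_in_context(3000, 256, 4096))     # against a window half that size
```

A 3,000-word prompt with a 256-token reply fits comfortably in the larger window but not in one half its size, which is the practical meaning of the doubled context length.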

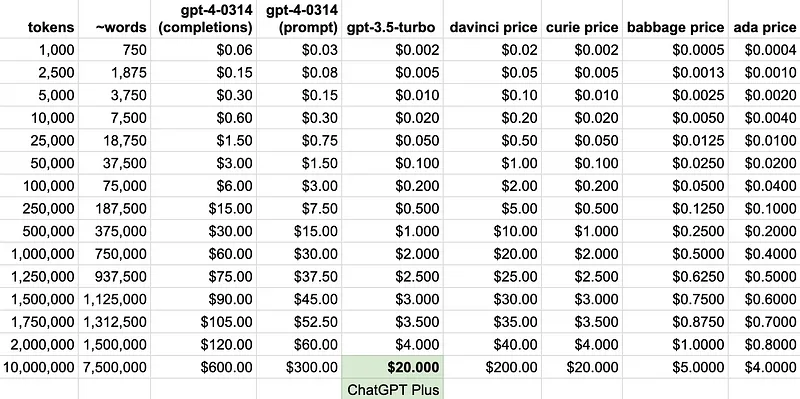

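How Much Does It Cost?

The hardest part of deciding whether to use the GPT-4 API is the pricing, which is as follows:

- Prompt: $0.03 per 1K tokens (about 750 words)

- Completion: $0.06 per 1K tokens (about 750 words)

The GPT-4 API is 14 to 29 times more expensive than gpt-3.5-turbo, ChatGPT's default model.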
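The pricing arithmetic can be sketched as follows, using the usage numbers from the sample response earlier in this guide. The gpt-3.5-turbo price of $0.002 per 1K tokens is an assumption (its list price at the time of writing) that is not stated in this guide, and the function name `gpt4_cost_usd` is hypothetical.

```python
GPT4_PROMPT_USD_PER_1K = 0.03
GPT4_COMPLETION_USD_PER_1K = 0.06
GPT35_TURBO_USD_PER_1K = 0.002  # assumption: list price at the time of writing


def gpt4_cost_usd(prompt_tokens: int, completion_tokens: int) -> float:
    """Cost of one request: prompt and completion tokens are priced separately."""
    return (
        prompt_tokens / 1000 * GPT4_PROMPT_USD_PER_1K
        + completion_tokens / 1000 * GPT4_COMPLETION_USD_PER_1K
    )


# Usage numbers from the sample response earlier in this guide.
sample_cost = gpt4_cost_usd(prompt_tokens=21, completion_tokens=5)
print(f"${sample_cost:.5f}")

prompt_ratio = GPT4_PROMPT_USD_PER_1K / GPT35_TURBO_USD_PER_1K
completion_ratio = GPT4_COMPLETION_USD_PER_1K / GPT35_TURBO_USD_PER_1K
print(f"{prompt_ratio:.0f}x to {completion_ratio:.0f}x the gpt-3.5-turbo price")
```

Under that assumption GPT-4 costs 15 to 30 times the gpt-3.5-turbo price, which is the same as being 14 to 29 times more expensive.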

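Future Improvements

- A future release of the GPT-4 API is expected to be multimodal. Here, "multimodal" means the API can accept images as well as text.

- A model with a 32,768-token context length (about 50 pages of text) is currently in preview, a 4x increase in context length over gpt-4-0314. However, it will cost twice as much as the 8,192-token GPT-4 model.

- Fine-tuning is available in the GPT-3 API for the base models (davinci, curie, babbage, and ada), and it is expected to become available for the GPT-4 API in a future release.

- The training data currently ends in **September 2021**. Future versions are expected to add more recent data and web access; the announced plugins are not yet available.

- When using GPT-4 through ChatGPT Plus, as of today: "GPT-4 currently has a cap of 25 messages every 3 hours. Expect significantly lower caps, as we adjust for demand." As this cap drops, direct use of the GPT-4 API is expected to increase.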